
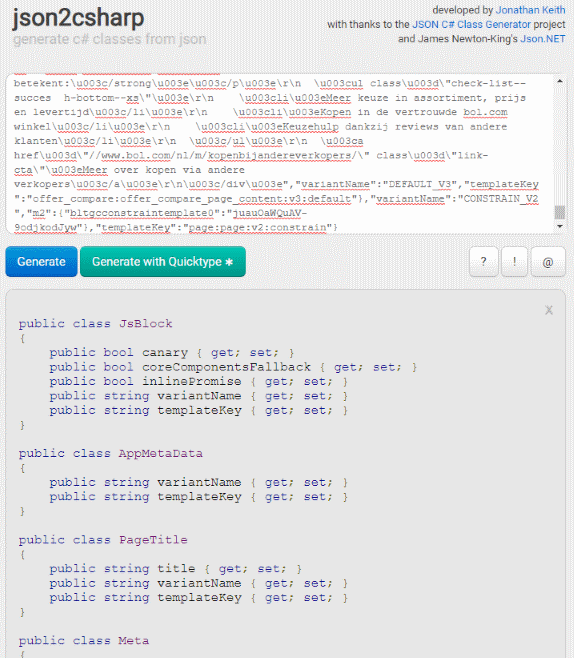

Xama soft json class generator

7/3/2023

In my daily work, I’m becoming quite familiar with the ins and outs of using System.Text.Json. For those unfamiliar with this library, it was released along with .NET Core 3.0 as an in-the-box JSON serialisation library.

At its release, System.Text.Json was pretty basic in its feature set, designed primarily for ASP.NET Core scenarios to handle input and output formatting to and from JSON. The library was designed to be performant and reduce allocations for common scenarios. Migrating to System.Text.Json helped ASP.NET Core continue to improve the performance of the framework. Since that original release, the team has continued to expand the functionality of System.Text.Json, supporting more complex user scenarios.

In the next major release of the Elasticsearch .NET client, it’s my goal to switch entirely to System.Text.Json for serialisation. Today, v7.x uses an internalised and modified variant of Utf8Json, a previous high-performance JSON library that sadly is no longer maintained. Utf8Json was initially chosen to optimise applications making a high number of calls to Elasticsearch, avoiding as much overhead as possible. Moving to System.Text.Json in the next release has the advantage of continuing to get high-performance, low-allocation (de)serialisation of our strongly-typed request and response objects. Since it’s relatively new, it leverages even more of the latest high-performance APIs inside .NET. In addition, it means we move to a Microsoft-supported and well-maintained library, which is shipped “in the box” for most consumers who are using .NET Core and so doesn’t require additional dependencies.

That brings us to the topic of today’s post, where I will briefly explore a new performance-focused feature coming in the next release of System.Text.Json (included in .NET 6): source generators. I won’t spend time explaining the motivation for this feature here. Instead, I recommend you read Layomi’s blog post, “Try the new System.Text.Json source generator”, which explains it in detail. In short, the team have leveraged source generator capabilities in the C# 9 compiler to optimise away some of the runtime costs of (de)serialisation.

Source generators offer some extremely interesting technology as part of the Roslyn compiler, allowing libraries to perform compile-time code analysis and emit additional code into the compilation target. There are already some examples of where this can be used in the original blog post introducing the feature. The team have harnessed this new capability to reduce the runtime cost of (de)serialisation.

One of the jobs of a JSON library is to map incoming JSON onto objects. During deserialisation, it must locate the correct properties to set values for. Some of this is achieved through reflection, a set of APIs which let us inspect and work with Type information. Reflection is powerful, but it has a performance cost and can be relatively slow. The new feature in System.Text.Json 6.x allows developers to enable source generators that perform this work ahead of time during compilation. It’s really quite brilliant, as this removes most of the runtime cost of serialising to and from strongly-typed objects.

This post will not be my usual deep dive style. Still, since I have experimented with the new feature, I thought it would be helpful to share a real scenario for leveraging source generators for performance gains.

One of the common scenarios consumers of the Elasticsearch client need to complete is indexing documents into Elasticsearch. The index API accepts a simple request that includes the JSON representing the data to be indexed. The IndexRequest type, therefore, includes a single Document property of a generic TDocument type. Unlike many other request types defined in the library, when sending the request to the server, we don’t want to serialise the request type itself (IndexRequest), just the TDocument object.

I won’t go into the existing code for this here as it’ll muddy the waters, and it’s not that relevant to the main point of this post. Instead, let me explain briefly how this is implemented in prototype form right now, which is not that dissimilar to the current code base anyway.

`public class IndexRequest : IProxyRequest`

`public void WriteJson(Utf8JsonWriter writer)`
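To make the IndexRequest idea concrete, here is a minimal sketch of a request that writes only its document through `WriteJson(Utf8JsonWriter writer)`, using source-generated metadata instead of reflection. Only the `IndexRequest` and `IProxyRequest` names and the `WriteJson` signature come from the post; the `Book` type, `BookJsonContext`, the constructor shape, and the `IndexRequestDemo` helper are assumptions made purely for illustration and are not the client's actual code. It assumes a project targeting .NET 6 or later, so that the System.Text.Json source generator runs at build time.

```csharp
using System;
using System.IO;
using System.Text;
using System.Text.Json;
using System.Text.Json.Serialization;
using System.Text.Json.Serialization.Metadata;

// Hypothetical document type, used only for this illustration.
public class Book
{
    public string Title { get; set; } = string.Empty;
    public int Pages { get; set; }
}

// The source generator completes this partial class at compile time,
// exposing pre-built metadata as BookJsonContext.Default.Book.
[JsonSerializable(typeof(Book))]
public partial class BookJsonContext : JsonSerializerContext
{
}

// Assumed interface shape; only the WriteJson signature appears in the post.
public interface IProxyRequest
{
    void WriteJson(Utf8JsonWriter writer);
}

// Assumed generic form of the request: a single Document of type TDocument.
public class IndexRequest<TDocument> : IProxyRequest
{
    private readonly JsonTypeInfo<TDocument> _typeInfo;

    public IndexRequest(TDocument document, JsonTypeInfo<TDocument> typeInfo)
    {
        Document = document;
        _typeInfo = typeInfo;
    }

    public TDocument Document { get; }

    // Writes only the document, never the request wrapper itself.
    // Passing JsonTypeInfo selects the source-generated path.
    public void WriteJson(Utf8JsonWriter writer) =>
        JsonSerializer.Serialize(writer, Document, _typeInfo);
}

// Hypothetical helper that renders any request to a JSON string.
public static class IndexRequestDemo
{
    public static string Render(IProxyRequest request)
    {
        using var stream = new MemoryStream();
        using (var writer = new Utf8JsonWriter(stream))
        {
            request.WriteJson(writer);
        } // disposing the writer flushes any buffered output to the stream

        return Encoding.UTF8.GetString(stream.ToArray());
    }
}
```

A caller would construct the request with the generated metadata, for example `new IndexRequest<Book>(book, BookJsonContext.Default.Book)`, and pass it to `IndexRequestDemo.Render`. The result contains the `Book` properties only, with no outer object for the request type. Taking `JsonTypeInfo<TDocument>` in the constructor is one possible design; a real client would more likely resolve it from a context supplied by the consumer.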
